170+ AI Models, Running Locally

Private AI for iPhone, iPad, Mac, and Vision Pro

Run Llama, Gemma, DeepSeek, and 170+ other models on your own hardware. Multi-agent teams, Knowledge Libraries, voice transcription with speaker ID, text-to-speech. No accounts, no tracking, no data leaves your device.

Available for iPhone, iPad, Mac, and Vision Pro

Unparalleled Freedom & Power

Designed for power users who demand native performance, choice, and absolute privacy.

170+ Local Models & 19 Cloud APIs

Never get locked into a single ecosystem. Run Llama, DeepSeek, and Gemma directly on your device with dual GGUF and MLX engines. Need massive reasoning capabilities? Switch instantly to OpenAI, Anthropic, or another of the 19 supported cloud APIs when connected.

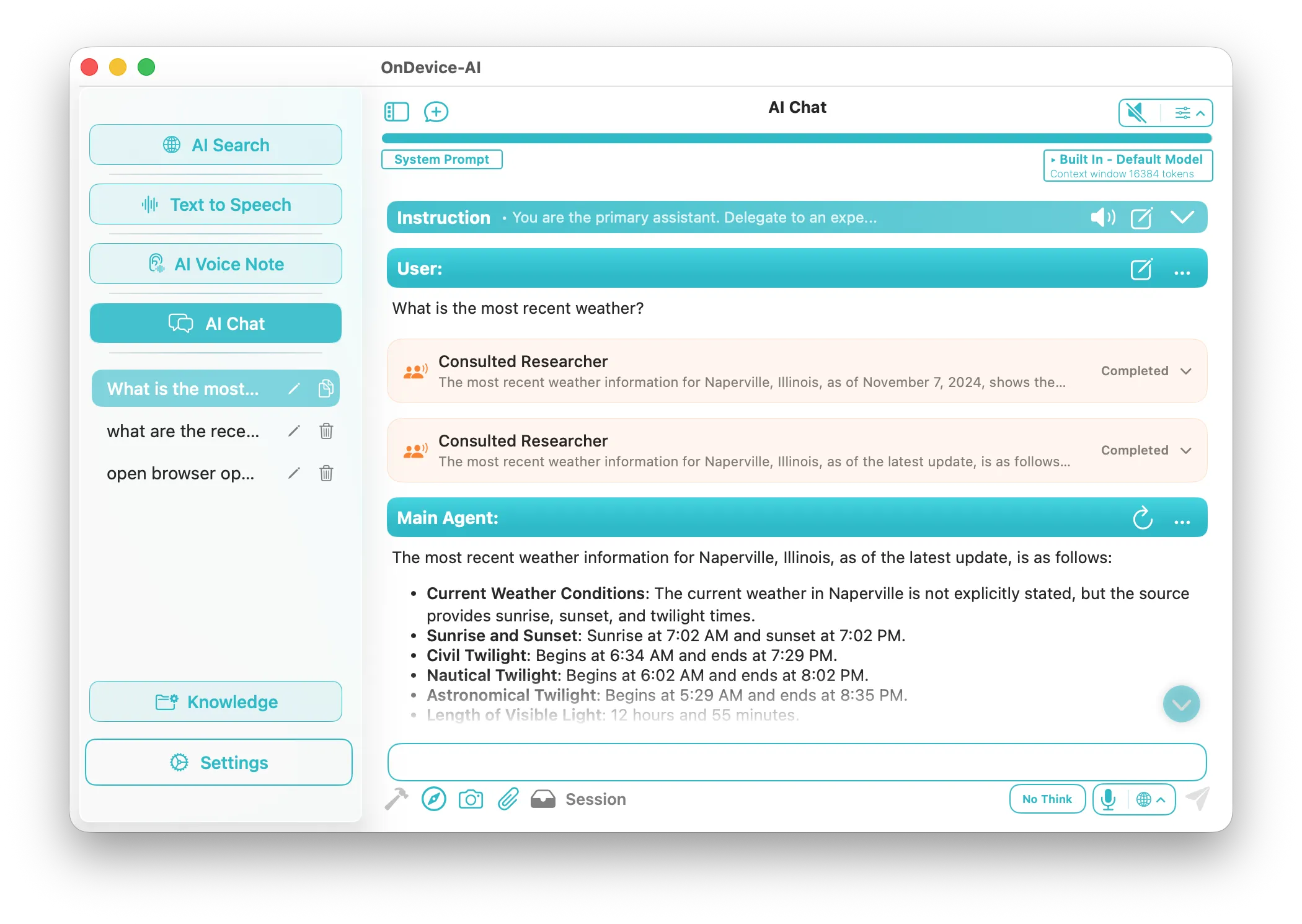

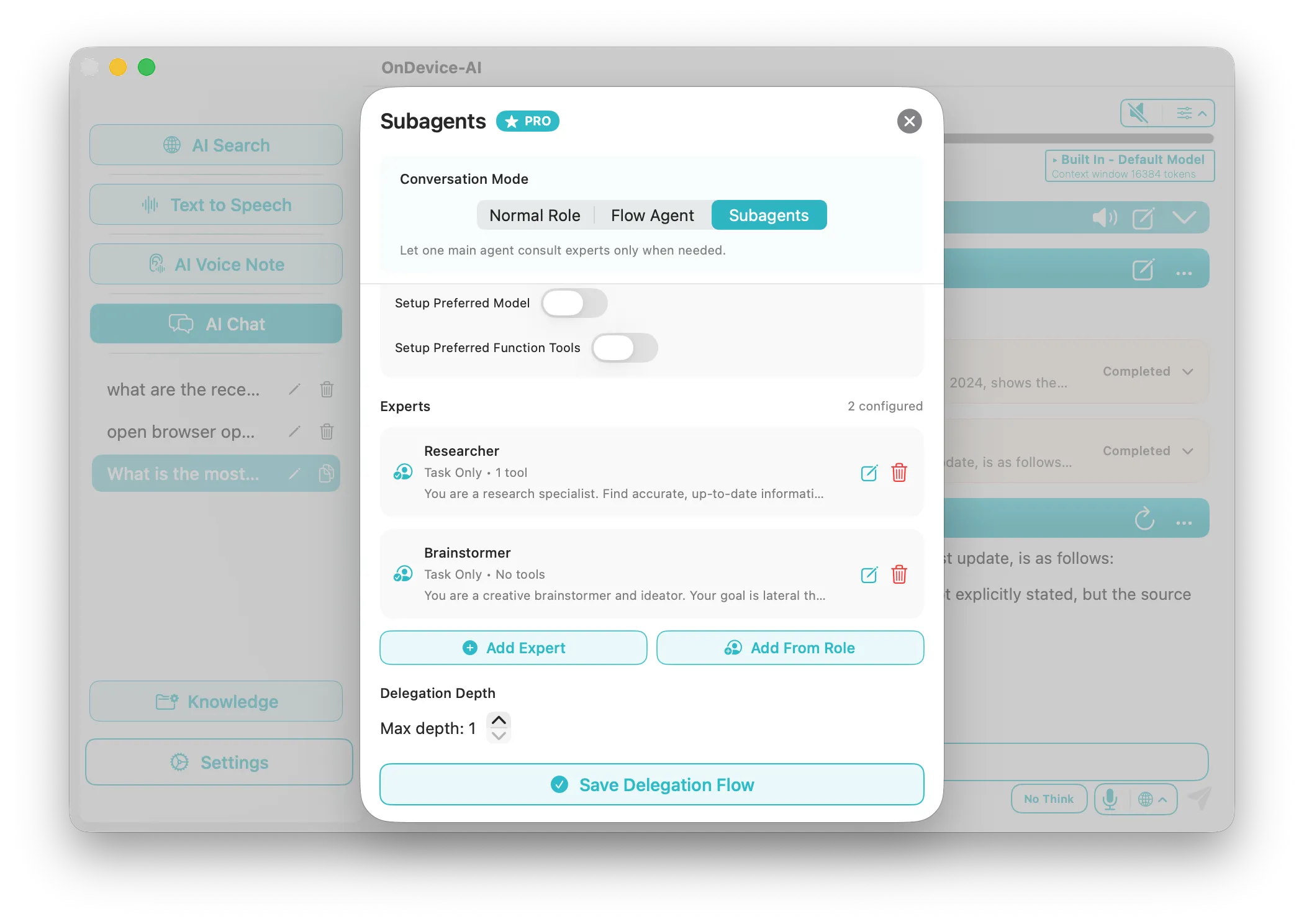

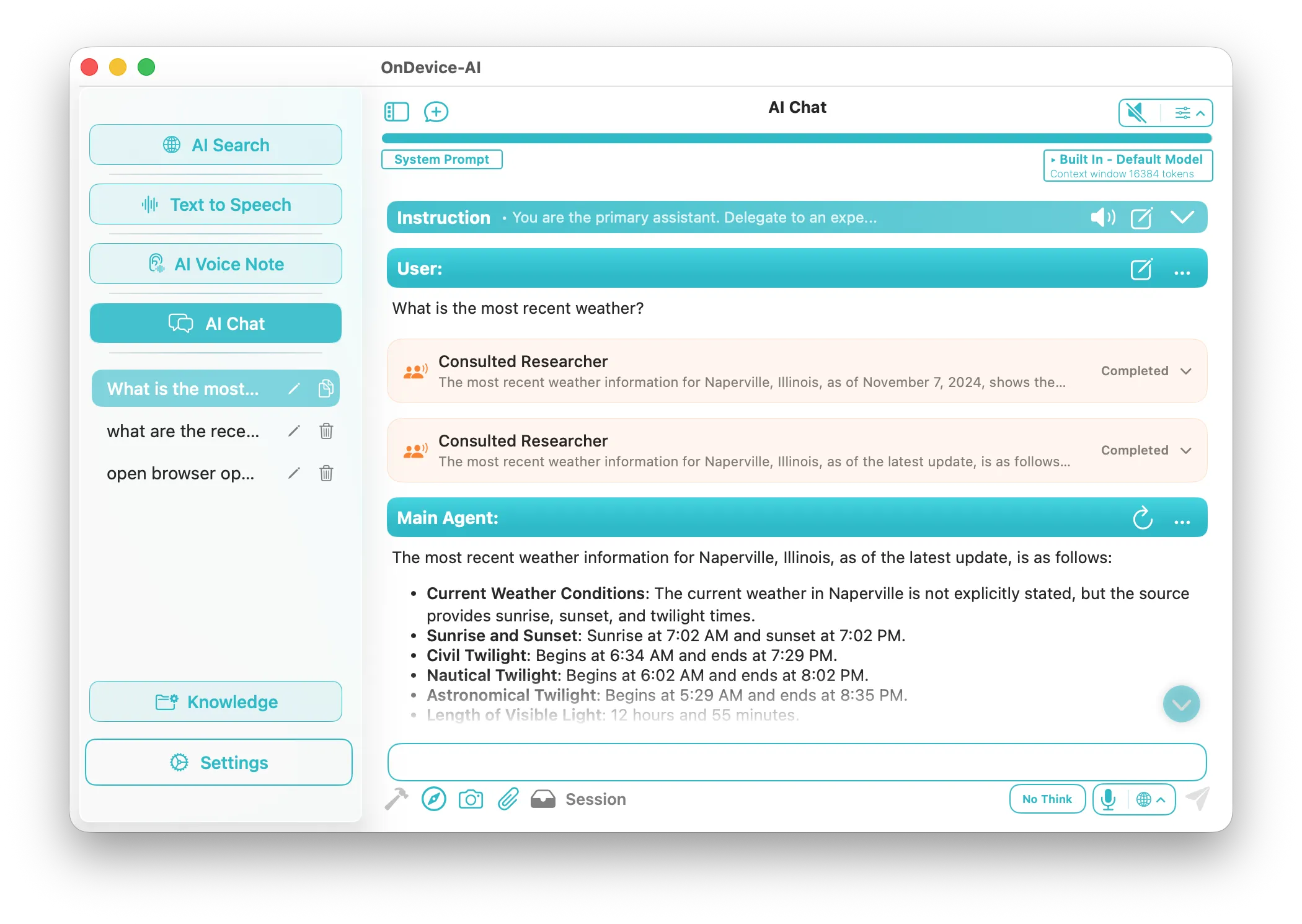

Multi-Agent Teams

Orchestrate specialized AI agents that collaborate autonomously. Assign distinct roles and models for complex tasks.

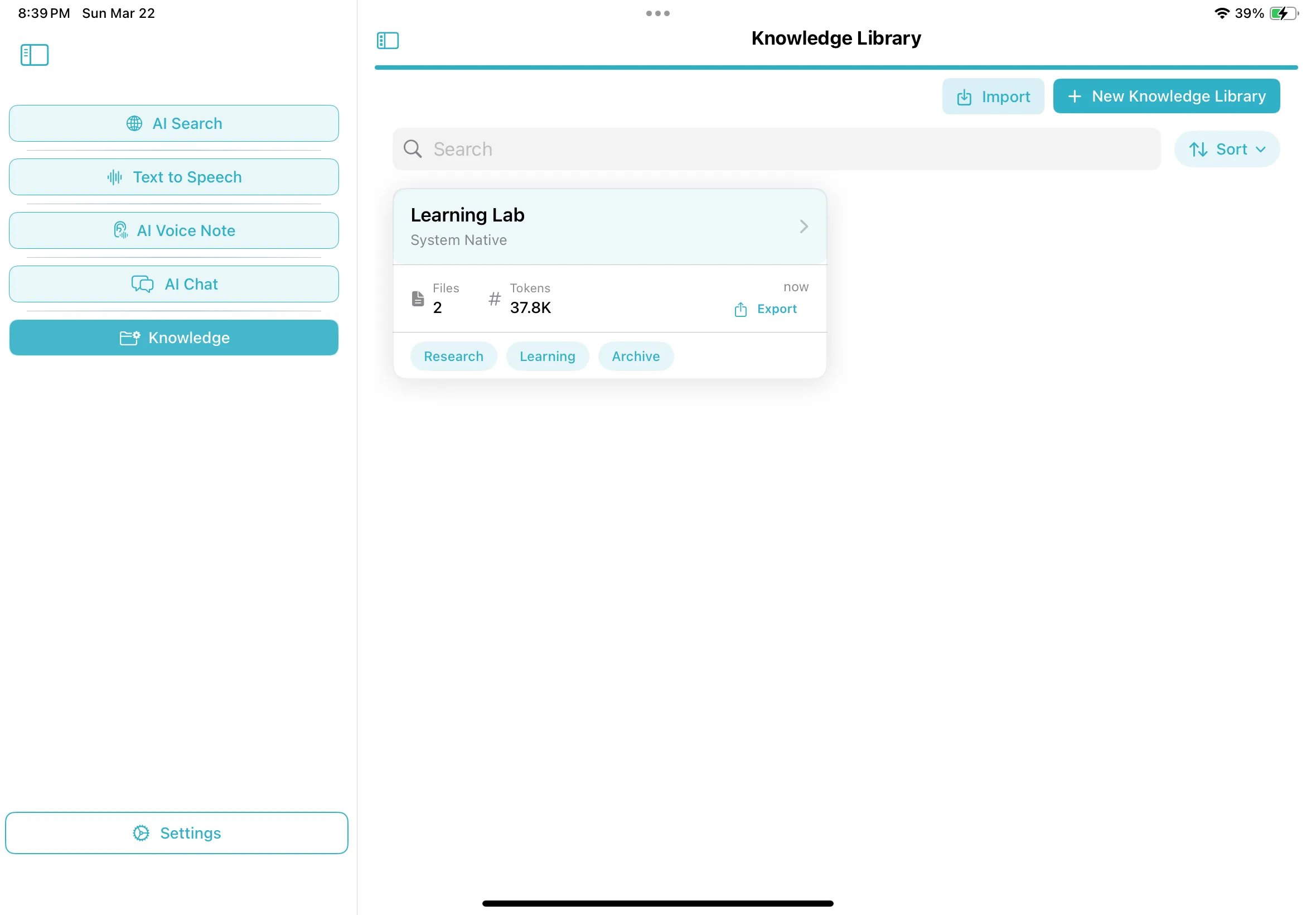

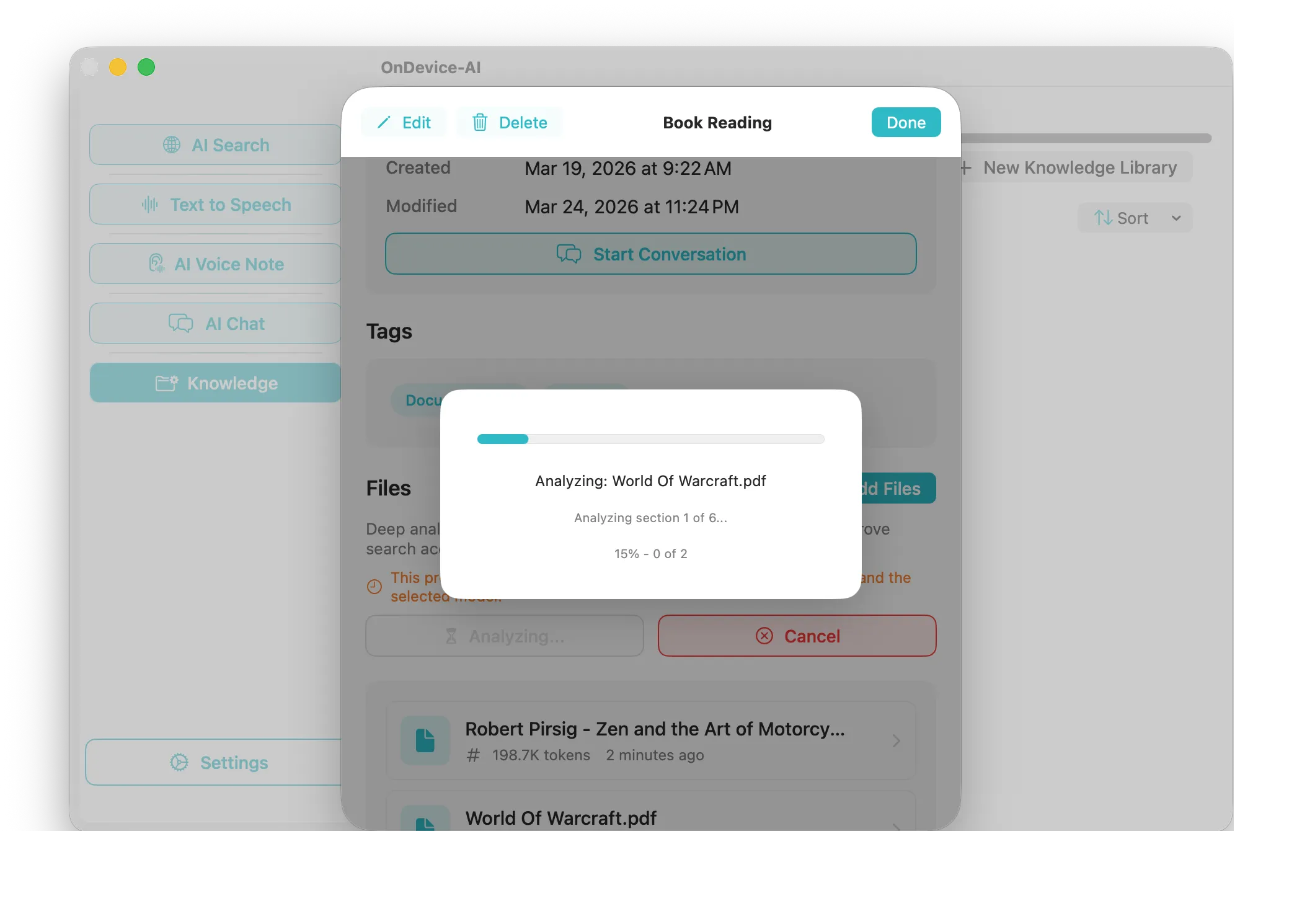

Knowledge Libraries

Your documents, your memory. Import PDFs and notes to create focused contexts. The AI retrieves answers securely, entirely on your device.

Pure Native Ecosystem

One seamless app across macOS, iOS, iPadOS, and visionOS. Use your Mac as a local inference server while interacting effortlessly from your iPhone. No sluggish web wrappers or Electron apps: just fast, native SwiftUI performance on every platform.

What Can On Device AI Do?

Powerful AI features right on your device, with no network dependency. Say goodbye to privacy concerns.

Subagents & Workflow Automation

Multiply your productivity. Deploy specialized agents (e.g., Researcher, Analyst, Writer) that consult each other to tackle complex projects simultaneously across your devices.


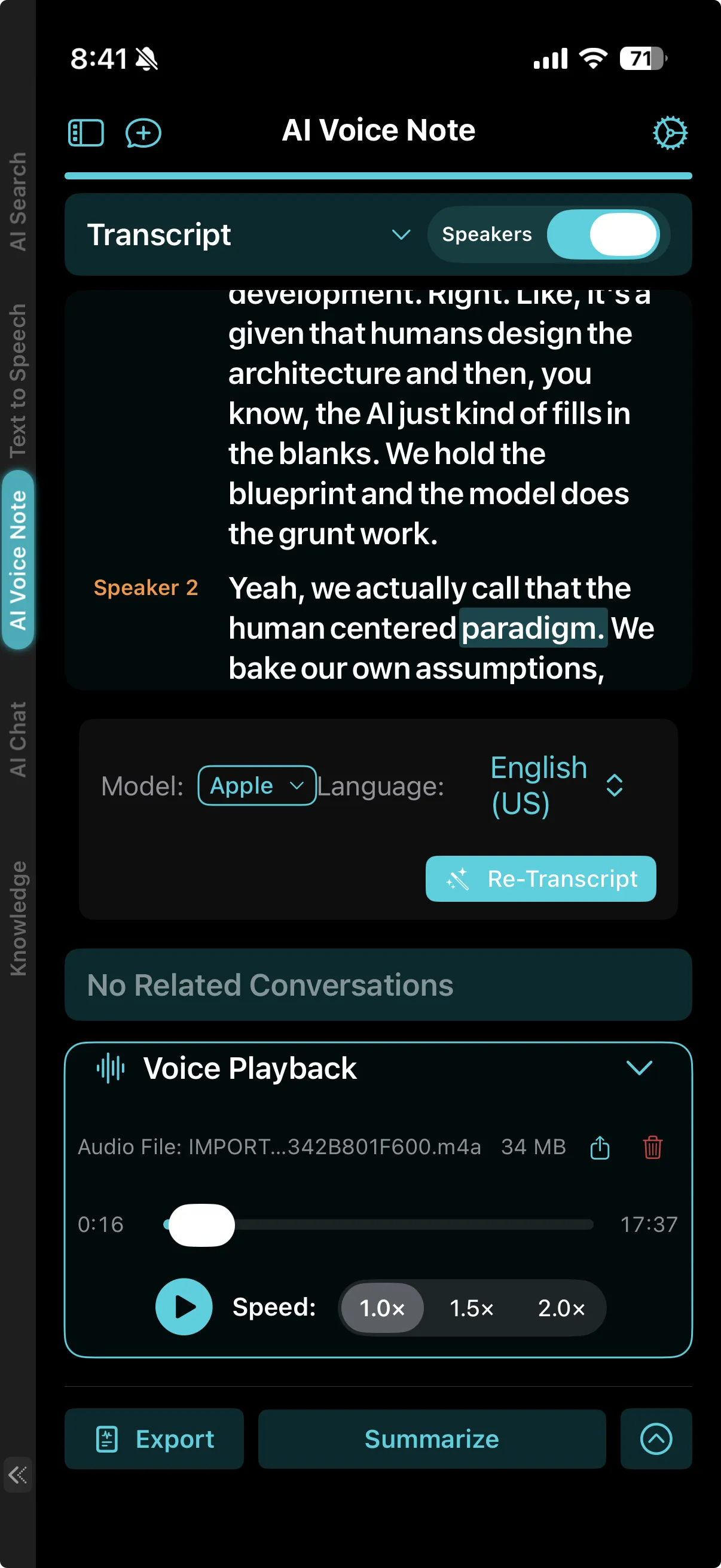

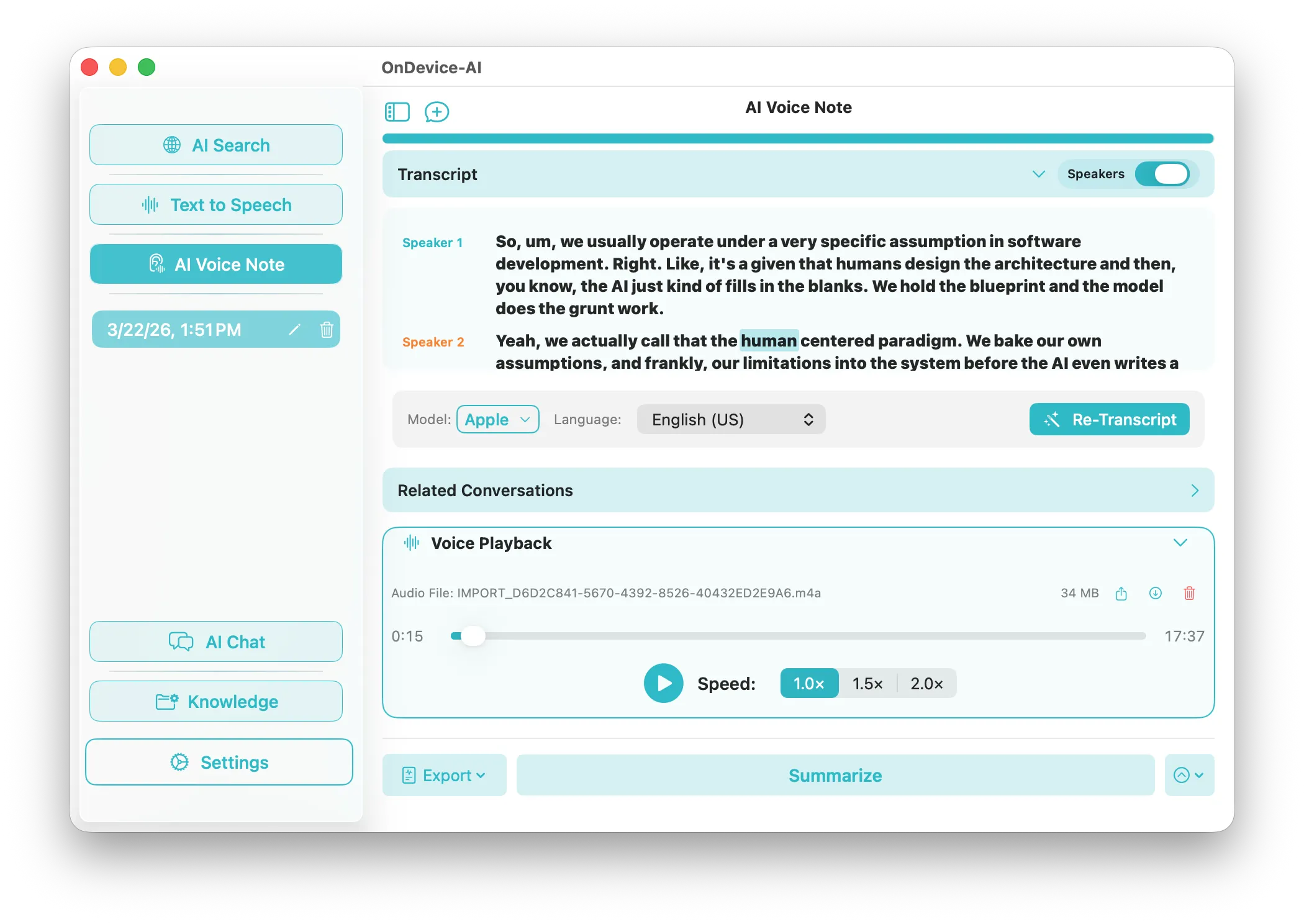

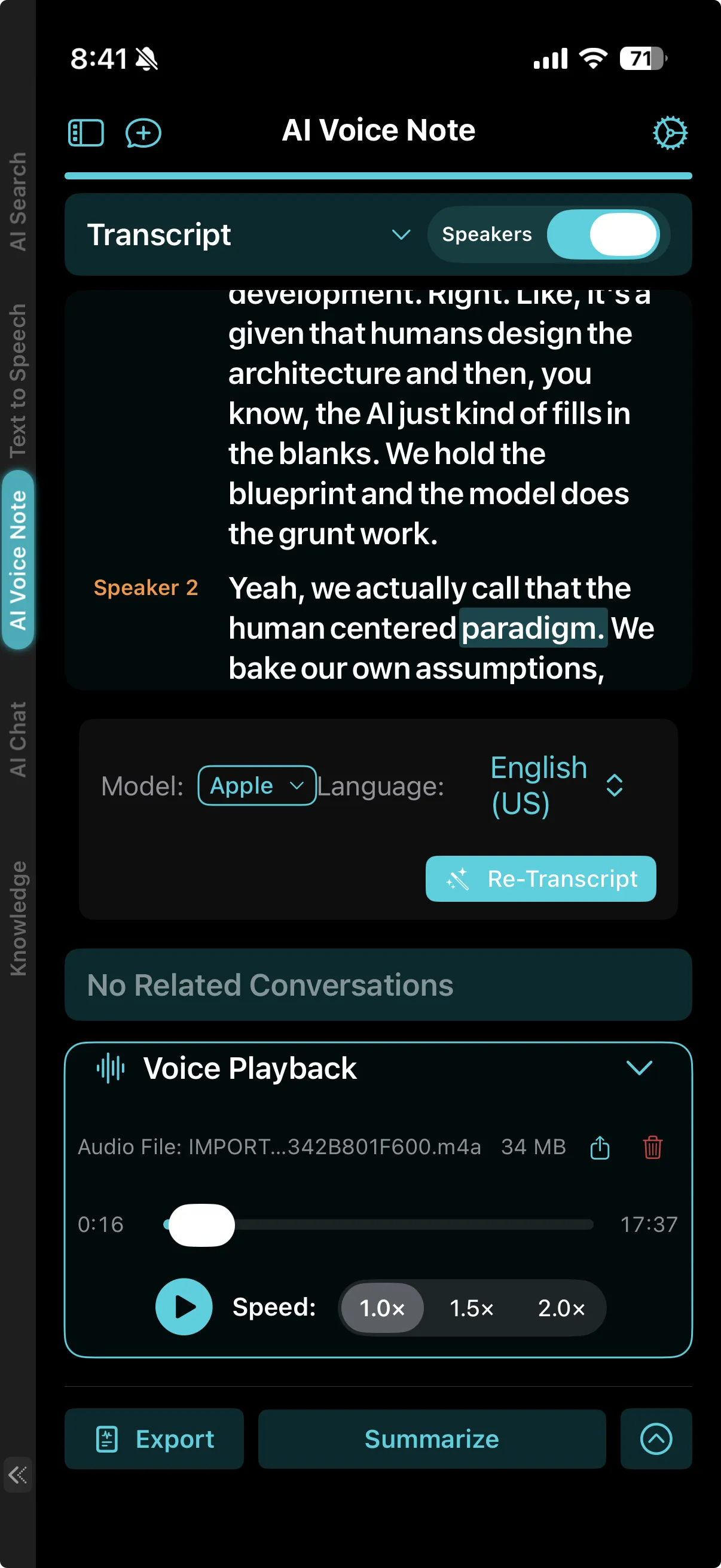

Voice Notes & Transcription

Never miss a detail. Quickly capture, transcribe, and summarize meetings with flawless, on-device transcription. Real-time speaker identification (diarization) keeps your notes perfectly organized.


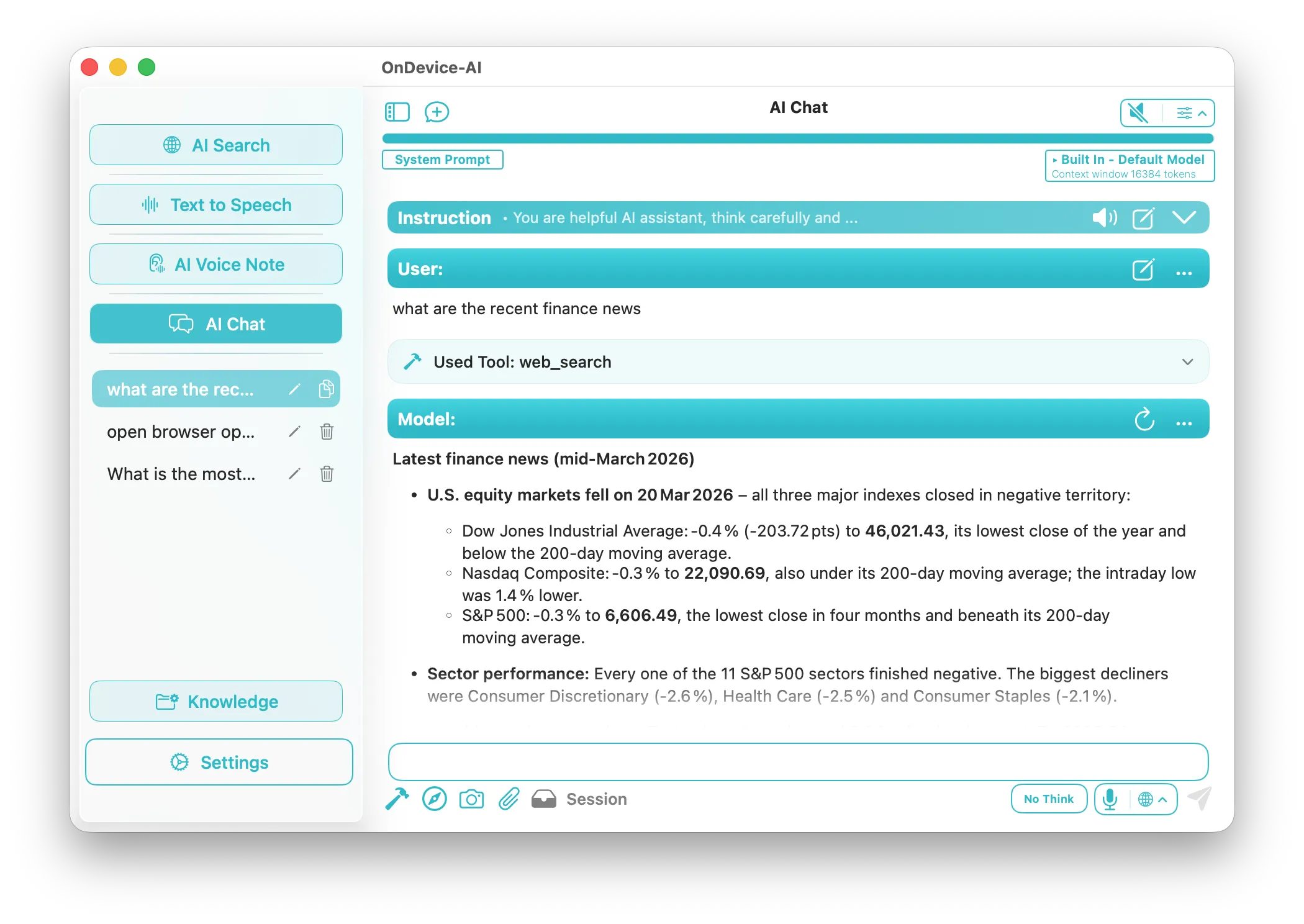

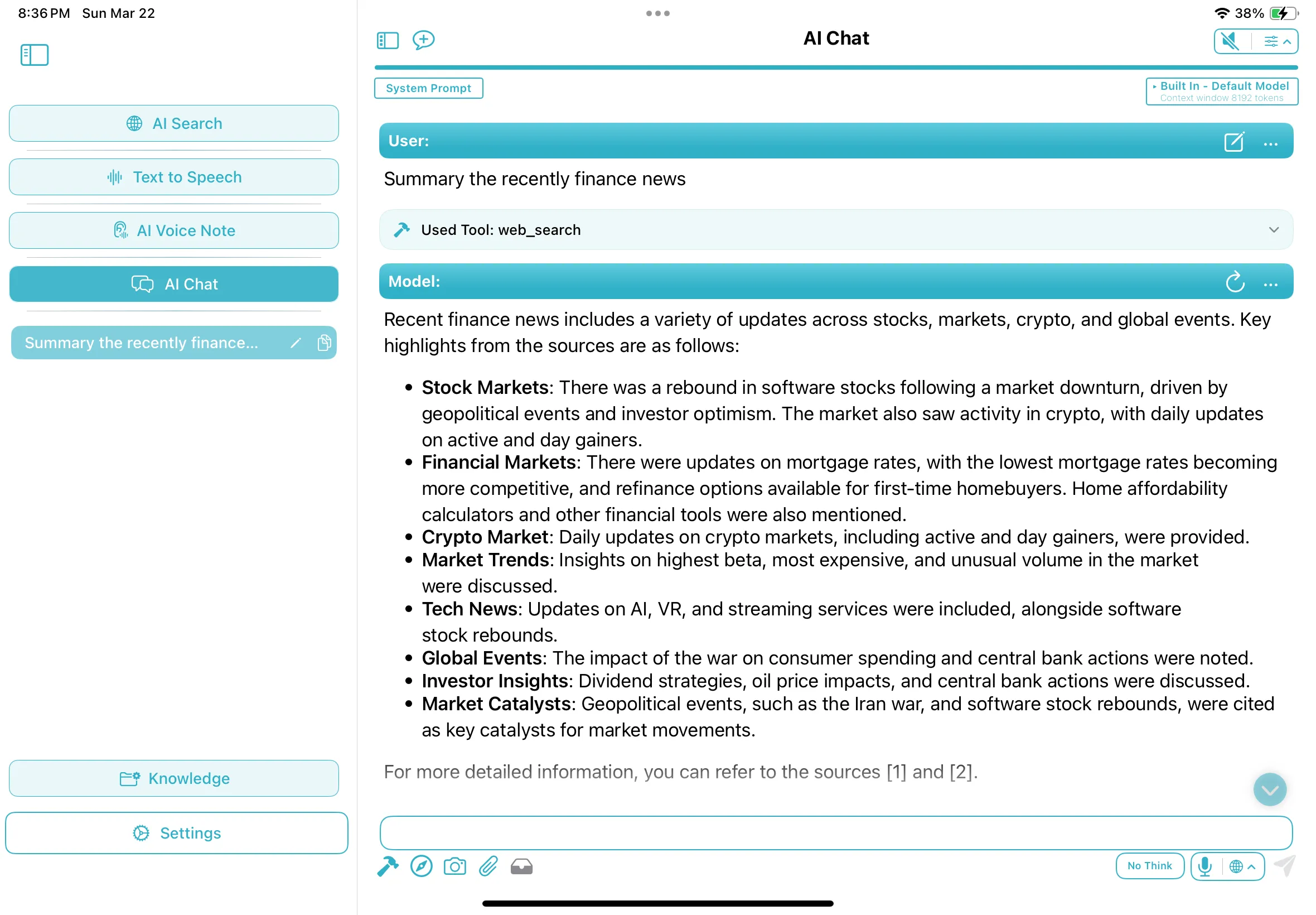

Active Tools & Web Search

Equip your AI with live knowledge. Let your agents fetch real-time financial news, run calculators, and search the web directly from your iPad or Mac while keeping your data private.


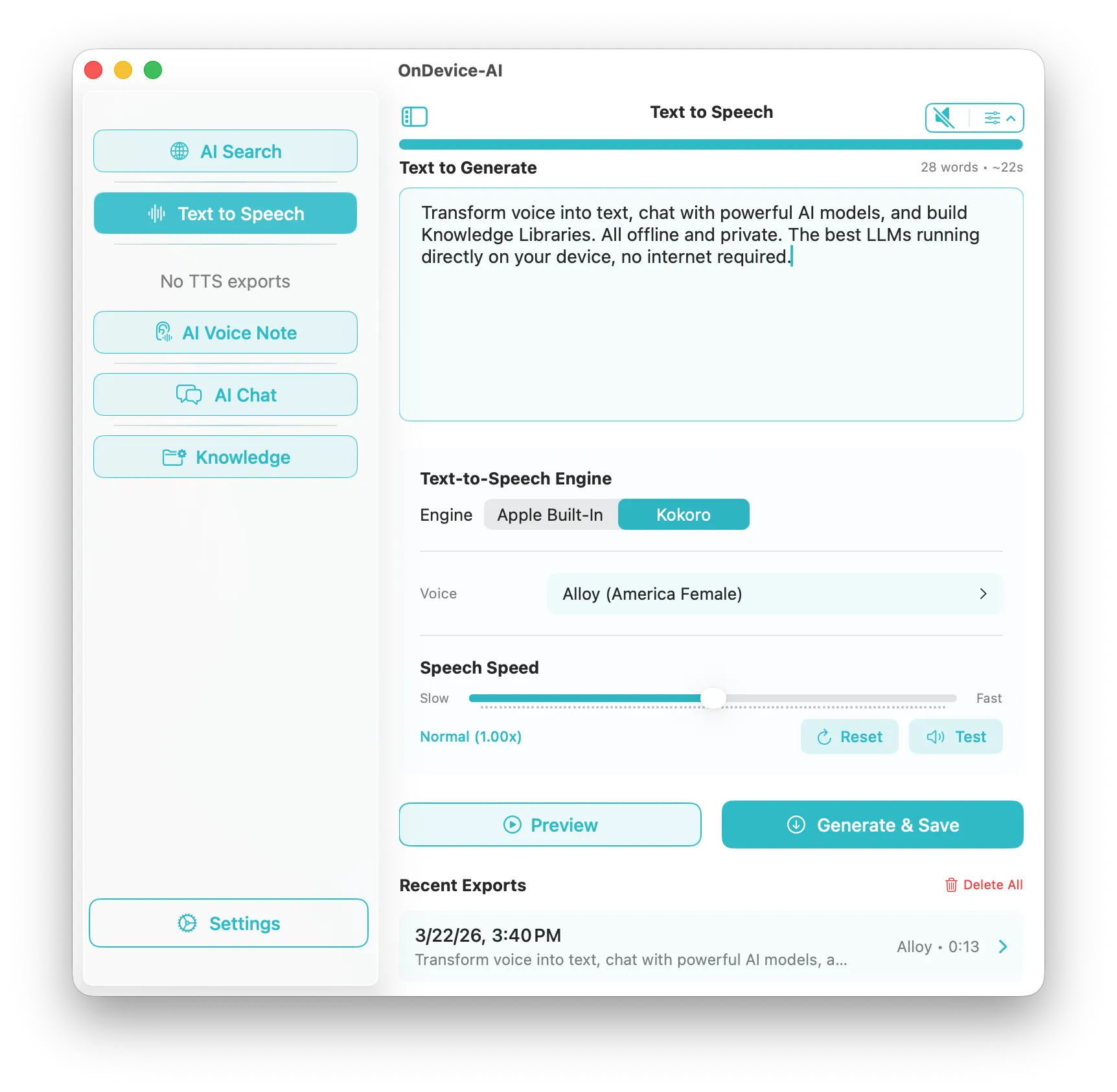

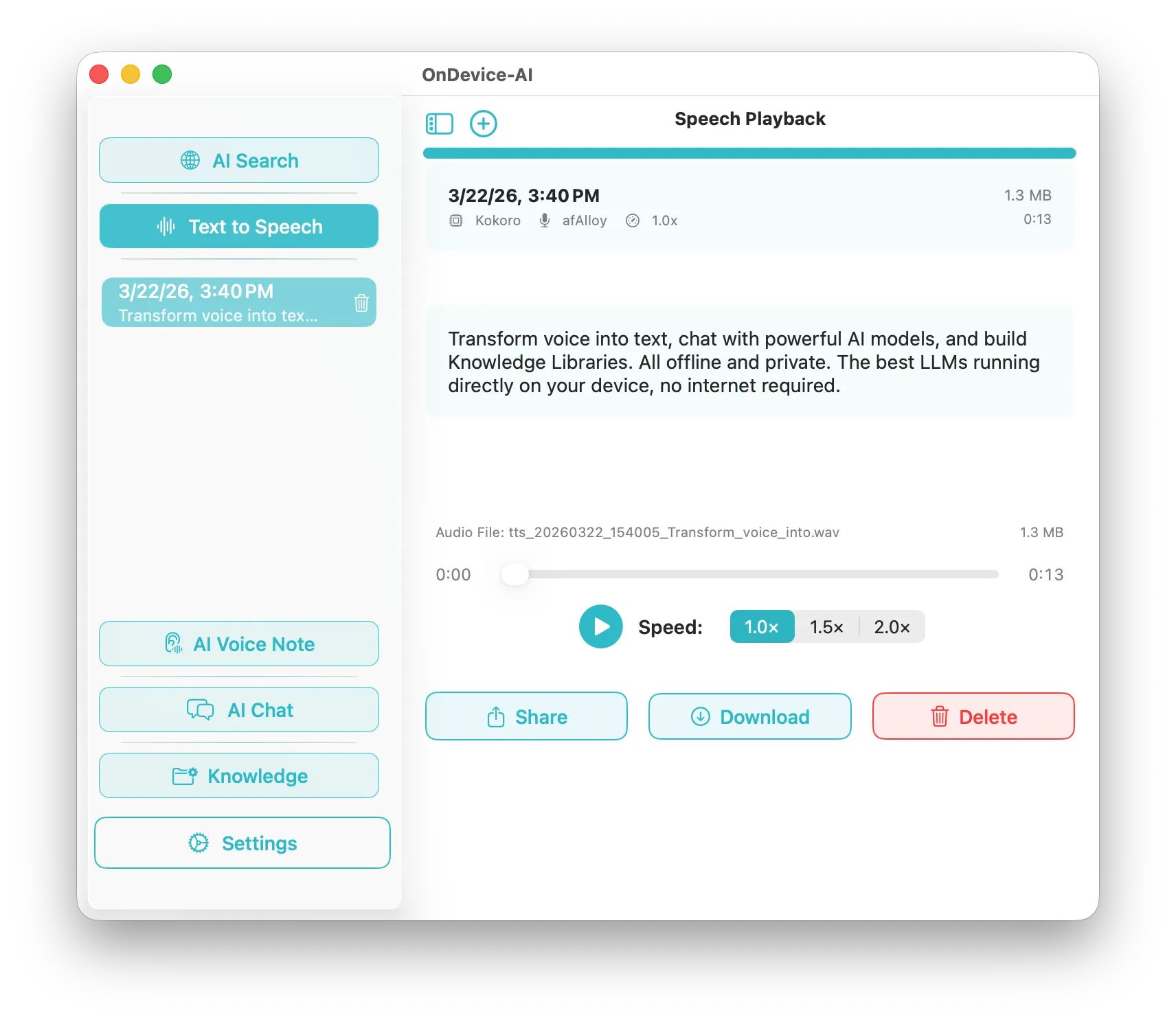

Professional Text-to-Speech

Generate natural, human-like speech with the Kokoro engine. Create lifelike audio offline, natively on your machine, to narrate PDFs, books, or articles without bandwidth limits.


Personal Knowledge Libraries & Files

Build dedicated offline memory spaces for your projects. Import PDFs and notes, and let your AI securely read, search, and synthesize answers directly from your documents.

Your Data. Your Choice.

Run leading models locally with zero data leakage, or opt in to cloud providers when you need maximum power.

Traditional Cloud AI

- Privacy risk — data leaves your device

- Limited control over your data

- Internet connection required

On-Device AI

- 100% private — data never leaves your device

- Fully customizable models and roles

- Works completely offline

Why Is On Device AI More Private?

All processing stays within your device boundary. No accounts, no tracking, no data collection.

- Zero Data Leakage: Your conversations, documents, and voice recordings never leave your device during AI processing.

- Complete Anonymity: No accounts required, no telemetry, no usage tracking. You own your data completely.

- Offline First: Core features work without internet. Cloud providers are optional and require your explicit consent.

Spatial Intelligence on Vision Pro

Immerse yourself in your AI workflows with breathtaking native visionOS support.

Available on All Apple Platforms

One app, optimized for every device in your ecosystem.

iOS

iPhone & iPad

- Voice transcription on the go

- Siri Shortcuts integration

- Camera & Vision model support

- Background recording

macOS

Apple Silicon Macs

- Maximum performance & large context

- Menu bar & global hotkeys

- IM integration (Discord, Slack, Telegram)

- Serve as remote inference server

visionOS

Apple Vision Pro

- Immersive AI experience

- Spatial computing ready

- Hand gesture controls

- Private AI in mixed reality